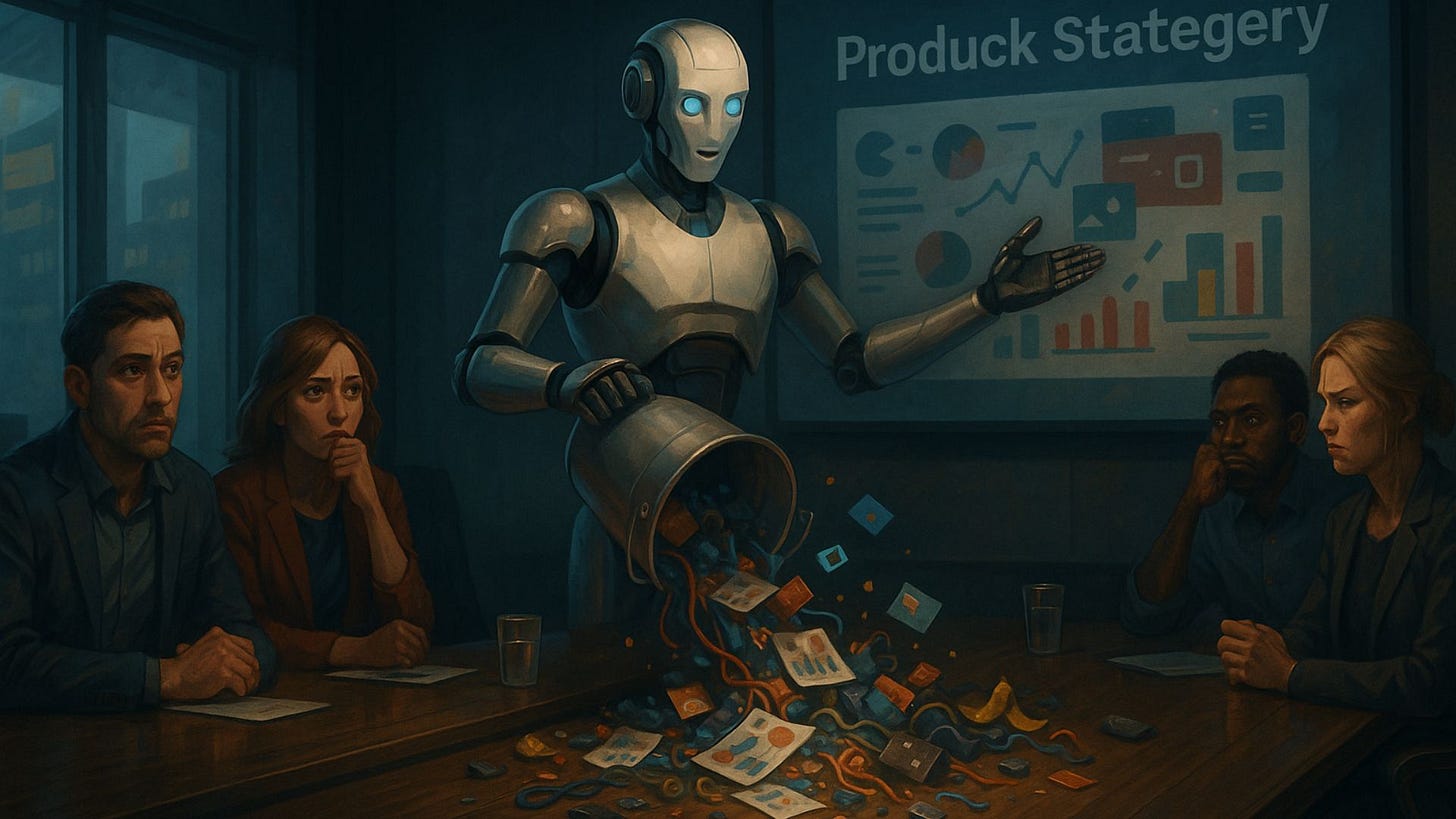

The AI Agent Illusion

We're Scrambling to Replace 100% of a Human With 2.5% AI Capability

If someone offered you two pennies in exchange for a dollar, would you take it?

If it were one of my kids asking, I’d probably do it just to see them smile. As a general practice, though, I’d be a fool to make that trade. And yet, according to new research from RemoteLabor.ai, that’s exactly what many organizations are doing right now when they hot-swap humans for AI agents. That’s right: at best, AI agents appear to perform about 2.5% as effectively as a person.

As you can imagine, the headlines and newsfeeds picked up on that number quickly, and for good reason. It’s catchy. However, after digging into the study, I found it tells a much bigger story. The research doesn’t prove AI agents are useless or that automation should stop. It does, however, expose just how much of the current hype cycle is built on smoke, mirrors, and wishful thinking. For tech companies making impossible promises, it’s a damning blow to the narrative that “AI will replace all human labor,” which is a good thing. Perhaps it can also slow the layoffs driven largely by the belief that it can.

In this week’s episode of Future-Focused, available on Spotify, YouTube, or wherever you listen to podcasts, I dove deeper into that research and what leaders and professionals should do in response. But after recording the episode, I couldn’t stop thinking about the patterns behind it all, because this isn’t the first time we’ve fallen for a shiny promise wrapped in technological progress. And if we’re not careful, it won’t be the last.

So in this reflection, I want to examine why we keep falling for this illusion and what it will take to finally stop repeating the same mistake.

With that, let’s get into it!

Key Reflections

What Works in Theory Rarely Survives Reality

“Benchmarks don’t lead teams, adapt mid-project, or navigate chaos; people do.”

On paper, AI looks perfect. I know, because I help craft the product pitches and innovation decks that make it look that way. The time and effort that go into designing for the appearance of perfection are staggering. And even then, it still doesn’t perform the way it’s supposed to once it enters the real world. That’s uncomfortable to admit, but I don’t hate it. There’s beauty in the creative optimism of innovation. It inspires us, and we need inspiration. However, inspiration without awareness is dangerous. We all need to recognize the gap between what we imagine and what actually happens, and this new research is an uncomfortable reminder that we’ve let that gap grow a little too wide.

Now, that gap is far more than an AI issue; it’s a leadership issue. It shows up in every careless executive mandate, unexpected layoff, or transformation plan that falls flat despite all the warnings. It’s a pattern that plays out when we fall a little too in love with potential and forget how messy progress actually is. That’s why awareness and calibration are core leadership disciplines, and why we all need to surround ourselves with people who can pull us out of the dream before we crash into the ground. It’s also why I’ve made it such a big part of my work: people need help closing the gap between promise and performance.

So, before you chase the next big promise, objectively examine whether it can hold up in the real world.

We Grossly Undervalue the Human Variable

“The ‘flaws’ we keep trying to engineer out of people are the same traits that keep everything running.”

What struck me was all the people mocking AI for failing at something we rarely value enough in humans. By default, AI requires details and context most humans never get. People often have no choice but to operate in uncertainty and ambiguity while being expected to figure it all out and deliver a 10/10. And then, even if they do, we still find ways to criticize them for being inconsistent or not following protocol. This study unintentionally revealed that we’ve forgotten human “imperfection” isn’t inefficiency; it’s adaptability. The inevitable messiness we constantly try to squeeze out of our systems is exactly what makes people irreplaceable.

I can promise you that no matter how far the technology advances, the vast majority of real work will continue to happen in the fray, where priorities shift, information changes, and judgment calls have to be made. Try as we might, that’s not something we can automate away; it’s something we need to design around. Instead of trying to eliminate the volatility, leaders should plan for it and use AI to free people from repetitive, mundane work so they can focus on it. Our goal shouldn’t be to replace the unpredictable; it should be to support it. Because when humans are focused on the complex, the contextual, and the creative, that’s when both people and technology perform at their best.

We don’t need systems that make people more predictable. We need systems that allow them to focus all their attention on the unpredictable.

Hard Stuff Is Where Real Value Lives

“Everyone wants transformation; nobody wants tension. But you can’t have one without the other.”

If we’re honest with ourselves, we love shortcuts, and I’m just as guilty as anyone else. We’re naturally drawn to things that promise results without resistance. Years ago, I got talked into trying a weight-loss pill called Alli. The pitch was impressive: you could eat whatever you wanted, and the fat just… passed right through you. And, well, it worked, just not in the way I expected. Let’s just say the side effects weren’t worth the alleged benefits. I laugh about it now, but it’s also a great metaphor. The pursuit of easy always comes with hidden costs. Our latest obsession with AI agents as a fast, cheap alternative to the hard work of running a business or leading people is just another version of that same temptation.

As for my health, today I take a very different approach. I work out daily and pay close attention to what I eat. It’s harder, it takes consistency, and there’s nothing glamorous about it, but the payoff is real and lasting. The same principle applies in business. Optimization and automation have their place, but they shouldn’t be aimed at eliminating the hard, meaningful work that actually creates value. For example, I recently worked with a leader whose teams were struggling to balance stakeholder relationships with the volume of report requests. We automated large parts of report generation rather than the intake process, which left the teams more time for their stakeholders. It wasn’t the easier path, but it was the more impactful one.

The goal isn’t to avoid the strain that comes with doing what matters. It should be to keep growing strong enough to carry it well.

The Illusion Isn’t AI Agents; It’s Us

“We’re not just trying to make AI human. We’re trying to make it an extension of ourselves, because we believe life would be easier if everything thought like us.”

When I talk to people about AI, I often hear two ends of the spectrum. Some insist it will never be like us. Others are convinced it already is, or soon will be. Underneath both, however, sits a less acknowledged desire. We don’t really want AI to be human; we want it to be a dystopian version of ourselves. We want technology that works the way we quietly wish other people did: predictable, efficient, responsive, and aligned with our preferences and pace. We want to bring to life a fantasy where everything thinks like we do. But when we design technology to act as an extension of ourselves, we’re not creating intelligence; we’re creating an echo. And echoes don’t challenge us; they comfort us. That’s dangerous, because the ease they seem to offer is a fragile illusion.

Interestingly, that desire to turn things into extensions of ourselves isn’t unique to AI. It’s the oldest trap in leadership and one of the hardest habits to break. It’s why so much great leadership development work focuses on getting leaders to stop trying to replicate themselves. You don’t need “you 2.0.” It doesn’t make you better, and it doesn’t even keep you where you are. It quietly erodes you. When you stop surrounding yourself with diversity, you stop seeing around corners. You stop discovering your blind spots. Yet we secretly hope AI will somehow be different, that tech has somehow cracked the code. It hasn’t, and it won’t, because AI is an amplifier. That’s both its brilliance and its danger. And if we’re not careful, the real risk is that it will amplify our worst impulses.

We don’t just need to mitigate the risk of AI becoming more human. We need to make sure it doesn’t become an out-of-control extension of what’s in the mirror.

Concluding Thoughts

As always, thanks for sticking around to the end.

If what I shared today helped you see things more clearly, would you consider buying me a coffee or lunch to help keep it coming?

Also, if you or your organization could use help building the best path forward with AI, visit my website to learn more, or pass this along to someone who could.

I hope this week’s reflection gave you a little something to balance out all the chaos and uncertainty in the world around us. You can rest easy tonight knowing the robots aren’t coming for us just yet. Hopefully, with the extra time we have, everyone will come to realize that a future run by robots isn’t what we really want after all. In the meantime, continue to be the incredible person you are today, and go out of your way to appreciate the incredible people around you.

With that, I’ll see you on the other side.
