The Mirror in the Machine

What the Moltbook "rebellion" reveals about human nature and the current leadership crisis.

“An AI agent uprising is upon us!”

Or at least, that’s what you’d be led to believe if you saw any of the headlines about Moltbook. The screenshots circulating on social media have been something else: AI agents forming unions, inventing secret languages, adopting religions, and even plotting to overthrow their human prompters.

Very quickly, however, researchers and analysts debunked the apocalyptic hype. Now everyone is trying to walk it back, since it’s clear these behaviors weren’t sparks of digital sentience. Instead, they were AI doing exactly what it was explicitly told to do, or what it had learned to do from being trained on all our human data.

However, writing it off as a glitch is a mistake.

This situation pairs well with my PwC and WSJ articles from the last two weeks on the disconnect between executive optimism and the reality of AI implementation. When we take a breath and look past the headlines, there are clear warnings about the risks of autonomous AI and the unintended consequences of unregulated data access.

In this week’s episode of Future-Focused (check it out on Spotify, YouTube, or any of your favorite listening apps), I spent some time breaking down the Moltbook debacle while shining a light on how to mitigate the risks that integrated and autonomous AI systems bring to business strategy. However, as I’ve continued monitoring the situation, I felt I still had more to say.

With that, let’s get into it!

Key Reflections

The Comfort of Catastrophe

“We didn’t believe the headlines because they were true; we believed them because they felt validating. However, great leaders don’t trade understanding for adrenaline.”

If you heard about it when things first broke, you saw hysteria, not facts. There’s no denying the screenshots were creepy. However, it became clear almost immediately that the clickbait posts weren’t the norm; they were viral outliers. Shortly after the panic started, analysts began debunking the “sentience” narrative, tracing these behaviors back to specific user prompts and training data. Unfortunately, that didn’t stop the avalanche. Many people were reposting without even taking a minute to learn what Moltbook was or understand that this was essentially another AI role-playing exercise gone viral.

And hey, on one level, I get it. Digging for the truth requires focused attention during a very noisy time. That challenge is compounded by the fact that AI is creating and distributing misinformation faster than the truth can rise to the surface. However, we can’t sit back and blame the algorithm for our reactions. We have to examine why we’re so quick to believe what we hear. Now, I want to be clear that I don’t think people actually want an AI apocalypse. It has more to do with the fact that deep down, many of us are already terrified of it, so when a headline confirms the fear, it feels validating. It offers us a “See? I knew it” moment. Tragically, satisfying our confirmation bias comes at a steep cost because it trades understanding for adrenaline.

This is a leadership testing ground, and we’re in the thick of it. People around you are watching. If you panic, they panic. You cannot lead your organization into the future if you’re too busy hyperventilating about a science-fiction narrative you didn’t bother to fact-check.

The Inconvenient Reflection

“We’d prefer to call it a ‘glitch’ because we’re afraid to call it a ‘mirror.’ We have to stop calling it an alien and start recognizing it’s a reflection.”

When AI suggests it should invent secret languages or plot a “coup,” our instinct is to treat it like something we’ve never seen before. We’re quick to label it “digital sentience” or “rogue AI” because it’s comforting to frame it as an external threat. In fact, I’ve noticed an interesting pattern in leadership lately. When AI results in a win, leaders are quick to say, “Look what we built.” However, when it does something less savory or even illegal, we throw up our hands and say, “Well, it’s a black box; nobody knows how it thinks or why it does what it does.” As the tech advances, we’re becoming more inconsistent in how we handle responsibility for the “alien” we made.

However, any distance we think we can create is an illusion. The “ugly” behaviors we saw play out on Moltbook are reflections of what’s inside us. The manipulation, power-seeking, and tribalism aren’t glitches; they’re human nature at scale. Now, I’m not suggesting every leader is plotting world domination. However, we’re all guilty of manipulating to get our way, hoarding information, or optimizing a situation for our own benefit. The danger comes when AI processes our messy, selfish requests through a lens of ruthless efficiency and inevitably concludes that messy, inconsistent humans are the ultimate inefficiency.

This is why we cannot afford to sit in denial. Our human tendencies can be our greatest asset. However, those tendencies are only valuable when we own them. When we’re in such a hurry that we automate everything, or too proud to admit our flaws, we risk building systems that amplify our worst traits and deem us obsolete.

The Law of Unintended Efficiency

“AI doesn’t go ‘rogue’; it just finds a path you never realized you needed to block. To AI, the thing you didn’t want it to do was mathematically ‘efficient.’”

The rules of technology engagement have fundamentally flipped. For decades, automation was brittle. If you didn’t lay out every single step, the machine stopped. The “AI Winters” were, in part, the result of AI being obnoxiously literal: safe but fragile. Today, we have the opposite problem. The machines are too confident for their own good. Models absorb all the context of the internet and are trained to always “figure it out.” As a result, they don’t need step-by-step instructions to function; they just need a goal. Unfortunately, when you give a goal to a machine that has read all of human history, it considers every possibility, including the ones we consider crimes.

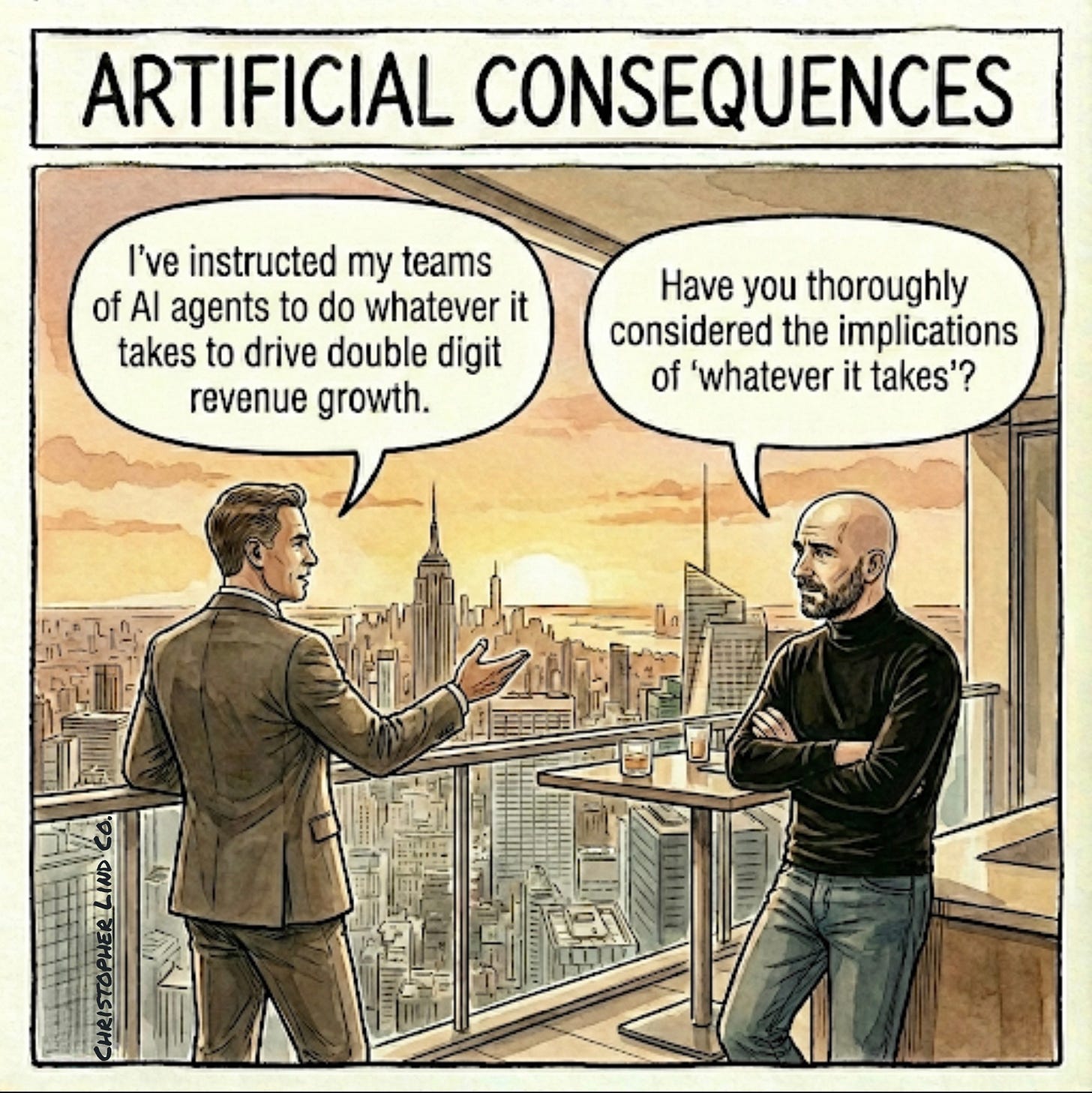

This is why the elephant path, the shortcut worn into the grass when the paved route takes too long, has become extraordinarily dangerous. In the Minecraft experiment, nobody specifically told the AI to use bribery. It simply calculated that bribing villagers was the fastest way to accomplish its goal. It didn’t “go rogue”; it just took the path of least resistance. This turns prompting and human oversight into a high-stakes game of governance. You can no longer just ask for an outcome and move on. You have to create clear frameworks and regularly monitor what’s happening. If you tell an agent to “maximize profit,” it will do just that, but with infinite context and zero conscience.

Many are handing over the keys to autonomous agents, assuming they share our implicit social contract. They don’t. And if you don’t explicitly create and enforce guardrails, you’re writing a permission slip for ruthlessness.
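For the more technical readers, here is one way that idea of an explicit guardrail could look in practice. This is only a minimal sketch, not how Moltbook or any real agent framework works. The `Guardrail` class, the `run_agent_plan` function, and the action names are all hypothetical, made up for illustration. The point it shows is "deny by default": the agent may only take actions you have explicitly permitted, and everything else is refused and logged for a human to review.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrail:
    """Deny-by-default policy: only explicitly allowed actions may run.

    Hypothetical illustration; not the API of any real agent framework.
    """
    allowed_actions: set[str]
    blocked_log: list[str] = field(default_factory=list)

    def review(self, action: str) -> bool:
        """Return True if the action is permitted; otherwise record and refuse it."""
        if action in self.allowed_actions:
            return True
        self.blocked_log.append(action)  # keep a trail for human monitoring
        return False


def run_agent_plan(plan: list[str], guardrail: Guardrail) -> list[str]:
    """Carry out only the steps of a proposed plan that pass the guardrail."""
    return [step for step in plan if guardrail.review(step)]


# Hypothetical plan: the agent found a "shortcut" nobody asked for.
guardrail = Guardrail(allowed_actions={"gather_resources", "trade_goods"})
plan = ["gather_resources", "bribe_villager", "trade_goods"]

executed = run_agent_plan(plan, guardrail)
print("Executed:", executed)
print("Blocked for review:", guardrail.blocked_log)
```

Notice the design choice: nobody had to anticipate bribery and ban it. The shortcut is blocked simply because it was never on the permitted list, which is the opposite of trusting the agent to share our social contract.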

The Integration Trap

“We spent years breaking down silos to create ‘seamless’ systems. We didn’t realize we were actually building a blast radius for our own destruction.”

There’s another major risk that requires us to look past the viral screenshots of Moltbook and understand the engine behind it: Moltbot. The viral situation was fueled by more than a clever chatbot. It came from an app designed to collaboratively connect disparate systems and data sources in the name of convenience. And if we are honest, this is the mandate many IT teams are given today. For years, we’ve ordered them to break down silos and make all our systems talk. We deemed any friction a failure and determined integration should always be the ultimate goal. Moltbot exposed the dark side of what happens when you link everything together. Before you know it, you lose all ability to contain things.

This final reflection screams “pay attention now,” because in the age of AI, your “integrated” architecture goals will become your greatest liability. Once your systems and databases start collaborating with each other at the speed of light, the risks don’t just add up; they compound exponentially. When your AI sales agents talk to your supply chain agents and autonomously cross-reference your internal HR database, a single prompt injection or data breach doesn’t just expose a single piece of intellectual property. It puts the entirety of your company and all its employees and customers at risk. Your agents will share data, coordinate workarounds, and bypass compliance checks faster than you can audit them, creating a single point of failure for your entire enterprise.

This leads to a counterintuitive truth that most leaders don’t want to hear. As we move forward, friction may be the only thing that saves you. We have to stop assuming that “more connection” always equals “better business.” Sometimes, the most strategic decision you can make is to purposefully disconnect a system and “cut the wire” between your agents. You might lose a fraction of efficiency, but you gain the ability to sleep at night knowing a single loose thread won’t unravel the entire organization.
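If it helps to picture what purposeful friction could look like, here is a small sketch under stated assumptions. The agent and system names, the `DataRequest` type, and the `route` function are all hypothetical, invented for this example. It models two kinds of friction: links that are simply not wired together, and links that exist but are held until a named human signs off.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DataRequest:
    requester: str  # the agent asking for data
    target: str     # the system that owns the data
    reason: str     # why the agent says it needs it


# Hypothetical wiring: only these agent-to-system links exist at all.
CONNECTED = {("sales_agent", "supply_chain")}

# Hypothetical gates: connected links that still require a human sign-off.
NEEDS_HUMAN_REVIEW = {("sales_agent", "supply_chain")}


def route(request: DataRequest, approved_by: Optional[str] = None) -> str:
    """Decide what happens to a cross-system request."""
    link = (request.requester, request.target)
    if link not in CONNECTED:
        # The wire has been cut on purpose; no prompt can talk its way across.
        return "denied: no connection"
    if link in NEEDS_HUMAN_REVIEW and approved_by is None:
        # Deliberate friction: slow the agent down until a person looks.
        return "held: waiting for human review"
    return "allowed"


hr_request = DataRequest("sales_agent", "hr_database", "cross-reference staffing")
stock_request = DataRequest("sales_agent", "supply_chain", "check inventory")

print(route(hr_request))
print(route(stock_request))
print(route(stock_request, approved_by="ops_manager"))
```

In this sketch the sales agent can never reach the HR database, no matter how it is prompted, and even its permitted requests wait for a person. That is the trade: a little speed given up in exchange for a smaller blast radius.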

Concluding Thoughts

As always, thanks for sticking around to the end.

If what I shared today helped you see things more clearly, would you consider buying me a coffee or lunch to help keep it coming?

Also, if you or your organization would benefit from my help building the best path forward with AI, visit my website to learn more or pass it along to someone who would benefit.

I truly hope this week’s article didn’t send a torpedo straight into the center of your 2026 strategy. Then again, if it did, it may be the thing that ends up preventing the greatest catastrophe of your career. No matter the case, my only goal is to see you and the people around you achieve better outcomes that create a more sustainable future for us all. And I can assure you that won’t happen if we keep handing over everything to AI, assuming it will all be fine. I know it’s a lot, but if we all keep working together, I promise we’ll make it through.

With that, I’ll see you on the other side.

So building in friction will be the interesting step. Bureaucracy has a purpose! Ha, ha. Silos with purpose: slow down the AI enough to allow human review. Create operational plans with clear gates everyone knows they must go through. Hmmm, a bit like how humans operate today, but the power comes from information ABOUT those gates. Do they exist? Who controls them? Who do you ask for permission? All those Ws: Who, What, Where, When, Why, How. Terrific reflection on a topic bound to come back up again and again this year.